ICASSP 2020 International Conference on Acoustics, Speech, and Signal Processing

Here are what we consider the most relevant upcoming audio-related conferences, and the sessions you should attend at ICASSP 2020.

To keep up to date with the latest in audio technology for our software development, we follow other researchers' studies and usually visit many conferences. Sadly, this time we cannot attend them in person. Nevertheless, we can visit them virtually, together with you. These are, in our view, the most relevant upcoming audio-related conferences:

- ICASSP (https://2020.ieeeicassp.org/)

- EUSIPCO (https://eusipco2020.org/)

- INTERSPEECH (http://www.interspeech2020.org/)

Let’s take a more detailed look at:

ICASSP 2020 International Conference on Acoustics, Speech, and Signal Processing

Date: 4th – 8th of May, 2020

Location: https://2020.ieeeicassp.org/program/schedule/live-schedule/

These are the sessions we recommend at ICASSP 2020:

Date: Tuesday 05th of May 2020

- Opening Ceremony (9:30 – 10:00h)

- Plenary by Yoshua Bengio on “Deep Representation Learning” (15:00 – 16:00h)

- Note: may be pretty technical, for deep learning enthusiasts

- Note: He’s one of the fathers of deep learning

Date: Wednesday 06th of May 2020

- Show and Tell 5 (16:30 – 18:30h)

- Interesting: Real-Time Voice Conversion

- Interesting: Video-driven Speech Reconstruction

Date: Thursday 07th of May 2020

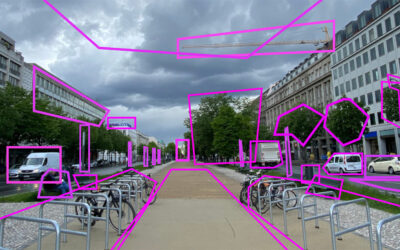

- Image/Video Synthesis, Rendering and Visualization (08:00 – 10:00h)

- Machine Learning for Speech Synthesis (11:45 – 13:45h)

- Interesting: Boffin TTS: Few-Shot Speaker Adaptation by Bayesian Optimization – A.K.A.: a quite obscure title corresponding to “learn to synthesize a voice using 5-10 minutes of training content”

- Voice Conversion (15:15 – 17:15h)

- Interesting: End-to-End Voice Conversion via Cross-Modal Knowledge Distillation for Dysarthric Speech Reconstruction – A.K.A.: deepfake the voice of someone who can no longer articulate sounds (Parkinson's, ALS, brain injuries, etc.)

We’re looking forward to seeing you there!

The Digger project aims:

- to develop a video and audio verification toolkit, helping journalists and other investigators to analyse audiovisual content, in order to be able to detect video manipulations using a variety of tools and techniques.

- to develop a community of people from different backgrounds interested in the use of video and audio forensics for the detection of deepfake content.
