Verification is not just about tools. Essential are our human senses. Whom can we trust, if not our own senses?

About The Digger Team

The team working on the Digger Project consists of Fraunhofer IDMT (audio forensics technology), Athens Technology Center (product development) and Deutsche Welle (project lead and concept development). The project is co-funded by Google DNI.

This collaboration between a technology company, a research institute and the innovation unit of a broadcaster is unusual. We combine different perspectives and expertise to arrive at an efficient, user-centered solution. This is also what verification is all about: collaboration.

Ruben Bouwmeester (Project Lead, DW)

What is your motivation to work on the Digger project?

The technology to create synthetic video content could be a threat to society, especially when it becomes available to a larger audience; the Deepnude app was just a wake-up call. Digger, to me, is a contribution to our quest for the truth: a toolset for journalists to verify online content and put it into perspective for the audience at large. It may also offer a better understanding to those who want to learn more about deepfakes and how they affect society.

How can the Digger project help to detect manipulated or synthetic media?

Within Digger we are creating a toolset that ranges from visual video verification assistants to audio forensic tools for detecting video manipulation. Our challenge is to make sure these tools are user-centered and easy to understand and work with, so that future users can actually detect video manipulation.

Julia Bayer (Journalist, Project Manager, DW)

What is your motivation to work on the Digger project?

Manipulated videos like shallow fakes and synthetic media like deepfakes are a big challenge that we journalists already have to deal with. Verification is a crucial part of our job, and the process we need for it is not changing, but it is getting more technical. With the Digger project we can support journalists and investigators and give them a technical toolset to debunk manipulated content and be prepared for the challenge.

How can the Digger project help to detect manipulated or synthetic media?

Digger helps people understand the architecture and technology behind synthetic media. We share our knowledge with the public and collaborate with experts to create the best accessible software for verifying manipulated video and synthetic media.

Patrick Aichroth (Head of Media Distribution and Security, Fraunhofer IDMT)

What is your motivation to work on the Digger project?

Free and democratic societies depend on science, rational argument and reliable data. Being able to distinguish between real and fake information is essential for our societies and for our freedom. However, fakes can be created more and more easily, and both “shallow” and “deep” fakes represent a growing threat that requires the use of various measures, including technical solutions. Detecting audio fakes and manipulation is one important element of that, and we are happy to provide and further improve our audio forensics technologies for this purpose.

How can the Digger project help to detect manipulated or synthetic media?

Digger provides a unique opportunity to explore and establish the combination of human- and machine-based detection of manipulated and synthetic material. Adapting and integrating automatic analysis into real-life verification workflows for journalists is a big challenge, but is also key for the future of content verification.

Luca Cuccovillo (Audio Forensic Specialist, Fraunhofer IDMT)

What is your motivation to work on the Digger project?

Audio forensics is a discipline which, when the fate of a person is at stake in a courtroom, helps the judges in their quest for truth. Deepfakes, however, have their largest impact not in the courtrooms, but on the Internet and in the news.

I decided to participate in the Digger project to provide my expertise not only to judges, but to society as a whole, in the hope that journalists and ordinary citizens may use our platform to defend themselves against fake content and ‘fake news’.

How can the Digger project help to detect manipulated or synthetic media?

There is a large gap between the analysis possibilities available to a courtroom and those available to ordinary citizens and journalists: most current forensic methods would be inconclusive or not applicable for the public.

Digger is the best chance we have of fixing this issue and reducing the gap. If we succeed, then plenty of analysis methods developed in recent years would become applicable, and we could finally fight disinformation on equal terms.

Stratos Tzoannos (Head Developer, ATC)

What is your motivation to work on the Digger project?

As a software developer, I realize that the power of technology is scary when it comes to deepfakes. On the other hand, it is a huge opportunity to understand and use the same technology for social good, trying to tackle such cases and save people from manipulation. From a technical point of view, I find the task of working with advanced algorithms and tools to make a step forward in the fight against misinformation extremely challenging.

How can the Digger project help to detect manipulated or synthetic media?

The first step is to understand what deepfakes are and how they are synthesized. After gaining a good knowledge of this technology, the next step is to apply reverse engineering in order to produce detection algorithms for deepfakes. Moreover, implementing different tools to facilitate multimedia fact-checking could add more weapons to the fight against malicious actions involving synthetic media.

Danae Tsabouraki (Project Manager, ATC)

What is your motivation to work on the Digger project?

From both a personal and a professional perspective, I feel that manipulated and synthetic media content like deepfakes may pose a serious danger to democracy, but also to other aspects of our private and public life. At the same time, most journalists lack widely accessible, commercial tools that would help them detect deepfakes. By participating in the Digger project along with Deutsche Welle and Fraunhofer IDMT, we aspire to contribute further to the ongoing effort of developing and providing technologies and tools that help journalists tell truth from fiction.

How can the Digger project help to detect manipulated or synthetic media?

Digger adds a twist to manipulated and synthetic media detection by bringing audio forensics into the mix. By using state-of-the-art audio analysis algorithms along with video verification features, Digger will provide journalists with a complete toolkit for detecting deepfakes. Digger also aims to bring together a community of experts from different fields to share their knowledge and experiences, leading to a better understanding of the challenges we face.

The Digger project aims:

- to develop a video and audio verification toolkit that helps journalists and other investigators analyse audiovisual content and detect video manipulations using a variety of tools and techniques.

- to develop a community of people from different backgrounds interested in the use of video and audio forensics for the detection of deepfake content.

Related Content

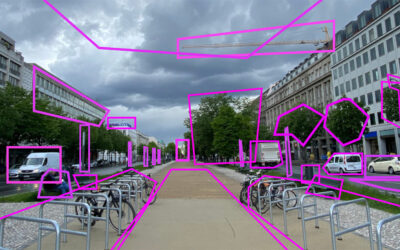

Train Yourself – Sharpen Your Senses

In-Depth Interview – Sam Gregory

Sam Gregory is Program Director of WITNESS, an organisation that works with people who use video to document human rights issues. WITNESS focuses on how people create trustworthy information that can expose abuses and address injustices. How is that connected to deepfakes?

In-Depth Interview – Jane Lytvynenko

We talked to Jane Lytvynenko, senior reporter with Buzzfeed News focusing on online mis- and disinformation, about how big the synthetic media problem actually is. Jane has three practical tips for us on how to detect deepfakes and how to handle disinformation.